Data Intelligence for DataOps

Enterprises have recognised the strategic imperative to use their data for competitive advantage by making it available and consumable at many points of use. The challenge, then, is how best to deliver the right data to the right people at the right time. This is where a data pipeline is valuable. It works much like a familiar physical pipeline, except that it carries data instead of materials. A common problem, however, is that data becomes siloed. An enterprise can end up with as many data pipelines as it has data analysts, data scientists, and data-hungry applications, each of which requires its own specialized data sets and data access rights to produce content.

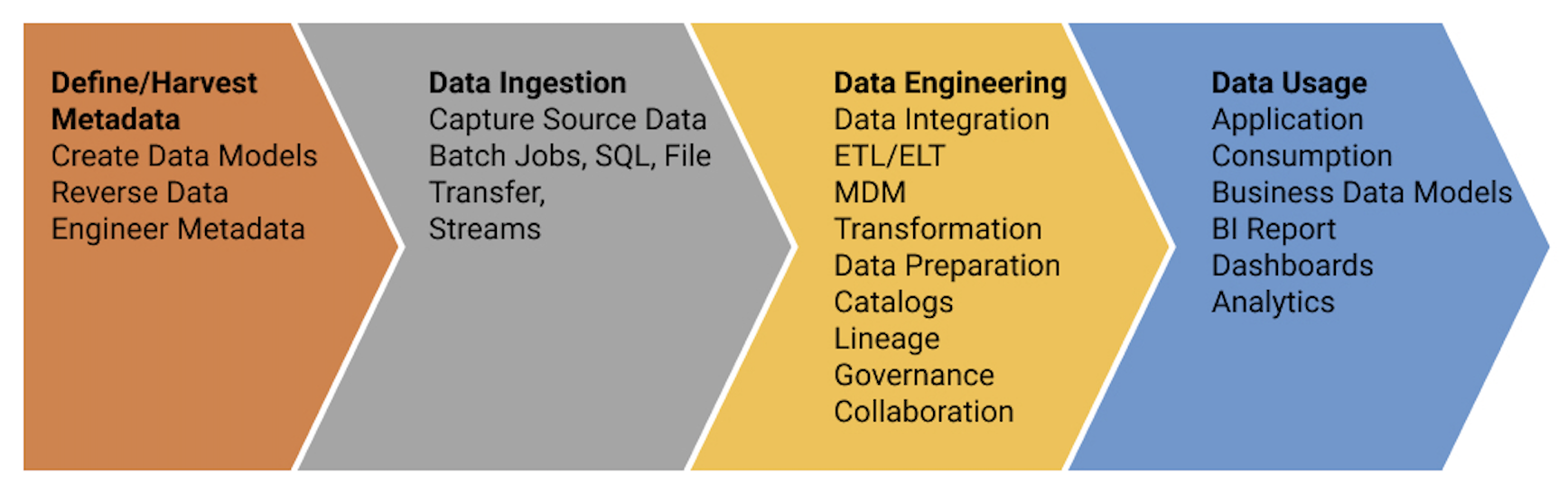

The figure above illustrates the lifecycle stages of a data pipeline.

Because data can be managed at each stage, the pipeline can also be subjected to a data governance lifecycle that includes selection, procurement, transfer, quality assurance, warehousing/storage, data management, transformation, monitoring, and distribution.
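To make the idea of governed pipeline stages more concrete, here is a minimal sketch, assuming a pipeline can be modelled as an ordered list of stages, each of which transforms a batch of records. Quality assurance is modelled as a check on each stage's output, and monitoring as an audit log entry. `Stage`, `run_pipeline`, and the example stages are hypothetical names for illustration only. They are not part of any erwin product or other library.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]


def always_ok(rows: List[Record]) -> bool:
    """Default quality rule (hypothetical): accept any output."""
    return True


@dataclass
class Stage:
    name: str
    transform: Callable[[List[Record]], List[Record]]
    check: Callable[[List[Record]], bool] = always_ok  # quality-assurance rule on the output


def run_pipeline(rows: List[Record], stages: List[Stage], audit: List[str]) -> List[Record]:
    """Run each stage in order, validate its output, and log the outcome (monitoring)."""
    for stage in stages:
        rows = stage.transform(rows)
        if not stage.check(rows):
            audit.append(f"{stage.name}: failed quality check")
            raise ValueError(f"quality check failed at stage '{stage.name}'")
        audit.append(f"{stage.name}: ok, rows={len(rows)}")
    return rows


# Hypothetical stages loosely named after the governance lifecycle above.
def drop_missing_amount(rows: List[Record]) -> List[Record]:
    return [r for r in rows if r.get("amount") is not None]


def to_cents(rows: List[Record]) -> List[Record]:
    return [{**r, "amount_cents": round(r["amount"] * 100)} for r in rows]


def eu_only(rows: List[Record]) -> List[Record]:
    return [r for r in rows if r["region"] == "EU"]


stages = [
    Stage("transfer", drop_missing_amount, check=lambda rows: len(rows) > 0),
    Stage("transformation", to_cents, check=lambda rows: all(r["amount_cents"] >= 0 for r in rows)),
    Stage("distribution", eu_only),
]

raw = [
    {"id": 1, "region": "EU", "amount": 12.5},
    {"id": 2, "region": "US", "amount": 3.0},
    {"id": 3, "region": "EU", "amount": None},
]
audit: List[str] = []
result = run_pipeline(raw, stages, audit)
print(result)
print(audit)
```

Failing a check stops the run and leaves a record in the audit log. This is one simple way to keep bad data from reaching later stages. A production pipeline would more likely quarantine the failing batch and raise an alert.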

You may have heard of DevOps (Development + Operations). It is a set of practices that brings operations staff into the software development loop. This extends the agile approach beyond the delivery of applications to cover their entire operational lifetime.

The inclusion of data in the DevOps lifecycle is increasingly referred to as DataOps (Data + Operations). This approach builds on the modern principles of agile software engineering and applies their rigor to developing, testing, and deploying the code that manages data flows and delivers data solutions.

The goal is to foster greater collaboration among development, test, operations, and business teams and to create a culture of continuous improvement. This data engineering approach is designed for the rapid, reliable, and repeatable delivery of production-ready data. Beyond speed and reliability, DataOps strengthens data governance through engineering disciplines that support the versioning of data, data transformations, and data lineage. It gives business operations the agility to meet new and changing data needs quickly. It also supports portability and technical agility, making it possible to rapidly redeploy data pipelines across on-premises, cloud, multi-cloud, and hybrid data ecosystems.
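Data versioning and lineage can be illustrated with a minimal sketch, assuming each dataset version is identified by a hash of its content. Each transformation records which input version produced which output version, so any result can be traced back to its source. The names `version_id`, `apply_step`, and `trace` are hypothetical, and the hash is truncated to 12 hex characters purely for readability. This shows one common technique (content addressing). It is not how any particular DataOps tool implements lineage.

```python
import hashlib
import json
from typing import Any, Callable, Dict, List, Tuple

Rows = List[Dict[str, Any]]


def version_id(rows: Rows) -> str:
    """Content hash of a dataset; sort_keys makes it independent of dict key order."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


def apply_step(name: str, fn: Callable[[Rows], Rows], rows: Rows, log: List[Dict[str, str]]) -> Rows:
    """Run a transformation and record a lineage edge: input version -> output version."""
    out = fn(rows)
    log.append({"step": name, "input": version_id(rows), "output": version_id(out)})
    return out


def trace(log: List[Dict[str, str]], version: str) -> Tuple[List[str], str]:
    """Walk lineage edges backwards from a version to the version it originated from."""
    by_output = {edge["output"]: edge for edge in log}
    steps: List[str] = []
    seen = set()
    while version in by_output and version not in seen:
        seen.add(version)  # guards against loops, e.g. a no-op step whose input == output
        edge = by_output[version]
        steps.append(edge["step"])
        version = edge["input"]
    return list(reversed(steps)), version


source = [{"id": 1, "amount": 12.5}, {"id": 2, "amount": None}]
log: List[Dict[str, str]] = []
clean = apply_step("drop_nulls", lambda rs: [r for r in rs if r["amount"] is not None], source, log)
final = apply_step("to_cents", lambda rs: [{**r, "amount_cents": round(r["amount"] * 100)} for r in rs], clean, log)

steps, origin = trace(log, version_id(final))
print(steps, origin == version_id(source))
```

Identical content always yields the same version id, so a rerun of the pipeline can be checked for reproducibility by comparing ids rather than whole datasets.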

This is the vision of DataOps: an emerging methodology for building data solutions that deliver business value.

Our recent webinar discussed how erwin solutions can help in the Define/Harvest Metadata and Data Engineering stages of the data pipeline.